Senators press tech firms on deepfakes, children’s safety and content labeling

Summary

Lawmakers pressed AI firms on preventing harmful deepfakes, protecting children and labeling AI‑generated content; companies described detection work and voluntary standards but senators urged stronger legal protections and takedown mechanisms.

Several senators used the hearing to press witnesses on deepfake content, child safety and whether the private sector can reliably block or remove harmful synthetic media.

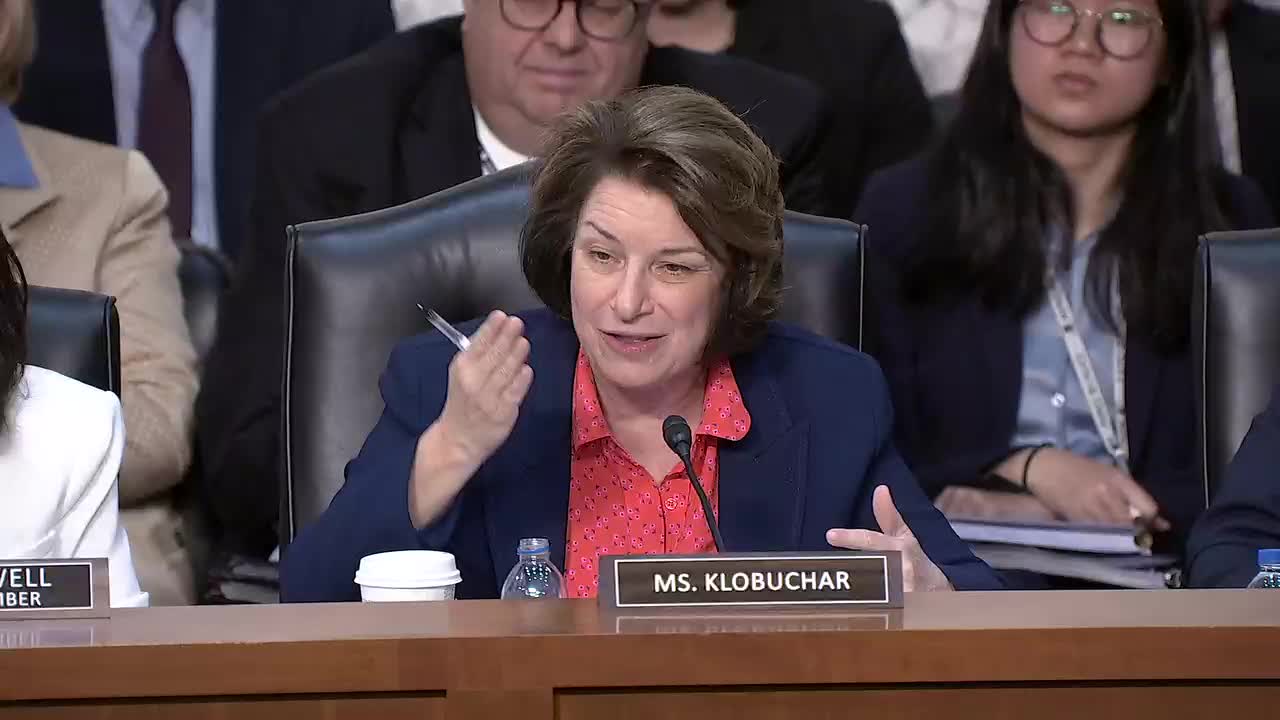

Sen. Amy Klobuchar said AI‑generated “hallucinations” and deepfakes cause real harm to individuals and communities, and asked how companies test models for correctness and detect synthetic audio, images and video. OpenAI CEO Sam Altman said robustness has improved since early releases and that external testing and red teams are a critical part of the development process. "External testing helps us find things that we may have missed internally," Altman told the committee.

Microsoft's Brad Smith described industry efforts on content provenance and identification. He cited content credentials, an industry standard from the Coalition for Content Provenance and Authenticity (C2PA), as a technical approach to help users and platforms identify how a file was created and who created it. Smith said platforms are also working with law‑enforcement and nonprofit partners to identify and remove harmful content.

Senators highlighted recent legislative work aimed at deepfakes and nonconsensual sexual images. Klobuchar and others noted passage of a bill to expand takedown tools and said the legislation is now headed to the president’s desk. Witnesses said they would collaborate with government and civil‑society groups to improve detection and removal systems. They also warned that some models and tools will be widely available, so society must build resilience alongside technical safeguards.

On children’s safety, Altman told senators that OpenAI applies stricter protections for children and said companies should work with government to produce an age‑aware framework that gives adults more flexibility while locking down capabilities for minors. Altman said better tools to distinguish child users from adult users would allow stricter protections to be enforced consistently.

Senators signaled they will press for a mix of technical standards, voluntary industry action and statutory protections covering content provenance, nonconsensual deepfakes and, in particular, stronger safeguards for children. Companies said they will cooperate with lawmakers and civil‑society groups.