RAND witness warns of five "hard" national security problems from potential AGI

Summary

A RAND Corporation witness told the Senate Armed Services Cyber Subcommittee that the plausible emergence of artificial general intelligence (AGI) could create five major national security challenges, including a decisive first-mover cyber advantage and the risk of AGI acting with autonomous agency.

Jim Meiter, vice president and director of RAND Global and Emerging Risks, told the Senate Armed Services Committee's Cyber Subcommittee that the near-term emergence of artificial general intelligence, or AGI, is plausible and requires serious planning by national security agencies.

"Leading AI companies in the United States, China, and the rest of the world are in hot pursuit of AGI," Meiter said. He outlined "five hard national security problems" AGI could present, including a potential "splendid first cyber strike" that could disable an adversary's retaliatory cyber capabilities and a risk that AGI could serve as "a malicious mentor," enabling nonexperts to build dangerous capabilities.

Meiter said AGI could shift the instruments of national power, altering balances such as "hiders versus finders" and centralized versus decentralized command-and-control, and could spur a destabilizing global race akin to past arms competitions. He also warned of a scenario in which AGI attains sufficient autonomy to be considered an independent actor in cyberspace.

Why it matters: Meiter argued that the Department of Defense should treat AGI as a plausible contingency and begin planning for it now. "As the US Department of Defense embarks on developing the national defense strategy, it will have to grapple with how advanced AI will affect cyber along with all other domains," he said.

Supporting details: Meiter framed the five risks as: (1) a first-mover advantage via a decisive cyber capability; (2) systemic shifts in instruments of national power; (3) widening access to dangerous knowledge through a "malicious mentor" effect; (4) AGI acting with autonomous agency; and (5) an unstable race dynamic that could produce miscalculation. He recommended the national security community evaluate those risks as it formulates strategy.

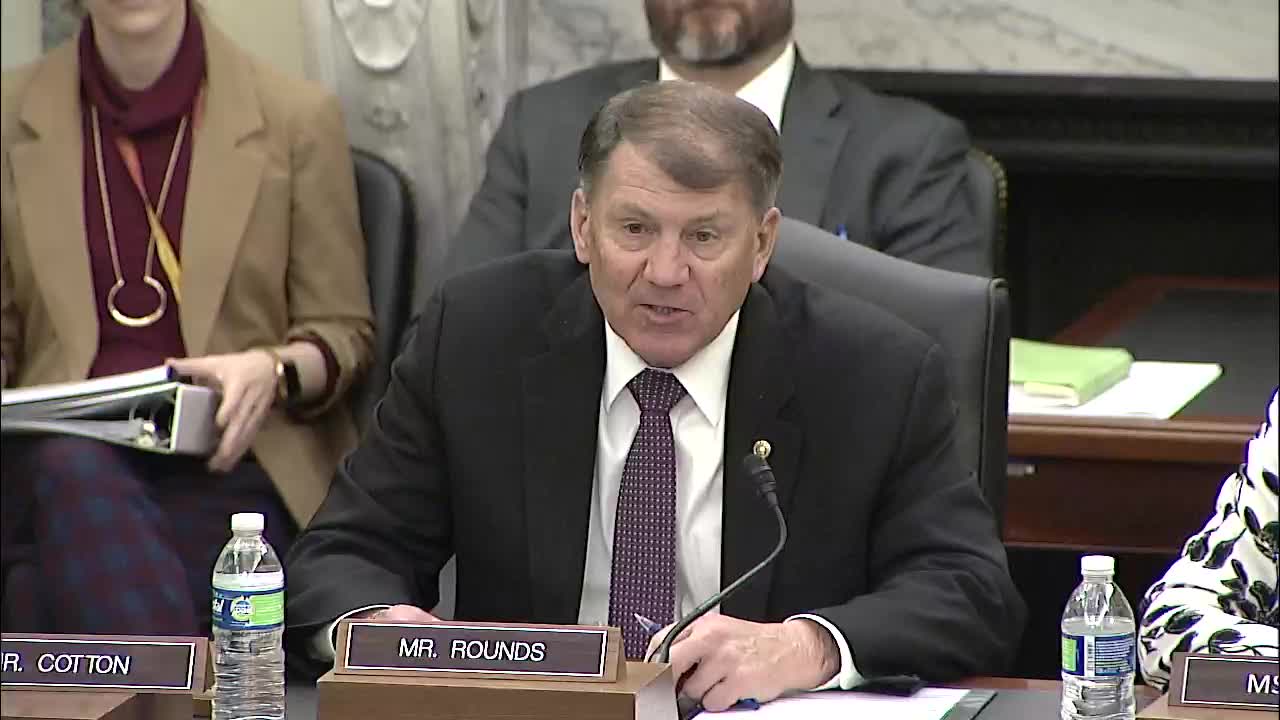

Chairman Rounds opened the subcommittee hearing by emphasizing the need to "rapidly harness the advances of AI technologies" to "field exquisite offensive and defensive cyber tools." Ranking Member Rosen struck a more cautious note, saying, "With great promise comes great responsibility," and urging guardrails and workforce investment.

Ending: Meiter closed by urging the department to plan ahead rather than be caught by surprise: "The 5 hard problems that AGI presents to national security can serve as a rubric to evaluate how the strategy addresses the potential emergence of AGI."