At Senate hearing, debate sharpens on open-source AI vs. controlled APIs and platform responsibilities

Summary

Witnesses and senators debated whether foundational AI models should be open-source or distributed through controlled APIs, weighing rapid diffusion against the need to mitigate misuse; Meta described the limited, vetted release practices it used for Llama 2.

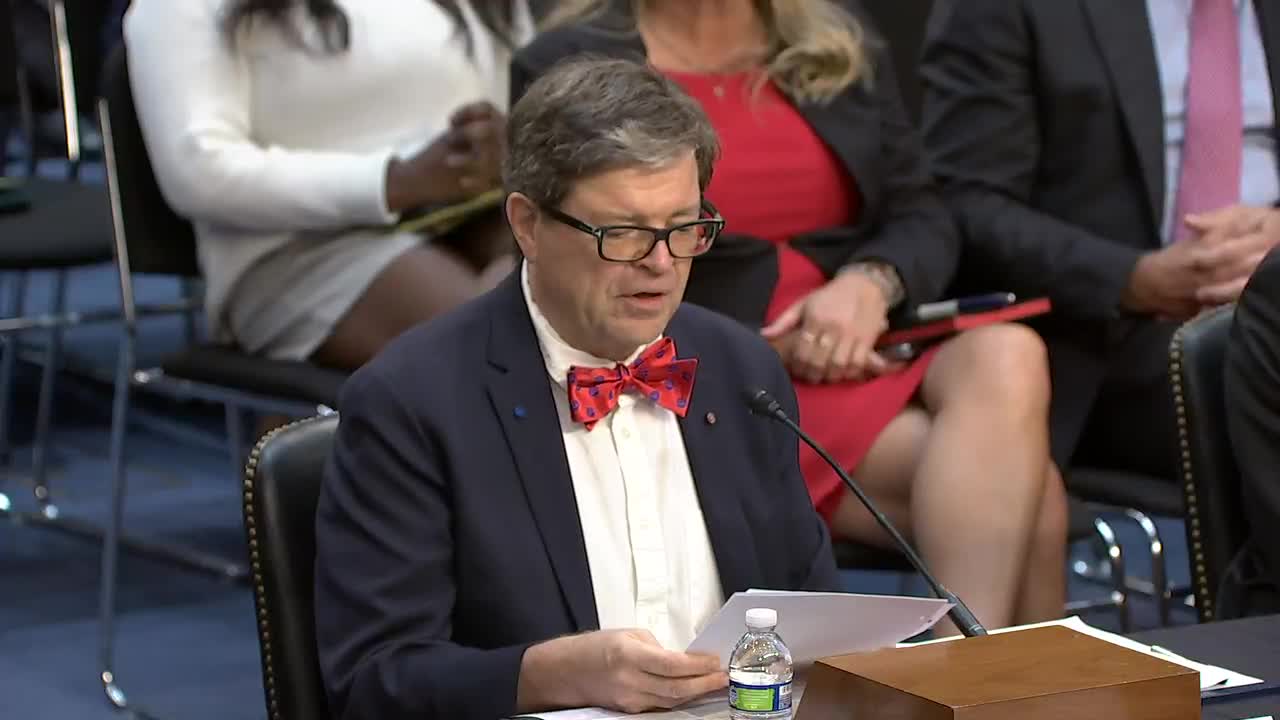

A key policy disagreement at the hearing centered on whether foundational AI models should be openly released or accessed through controlled interfaces. Dr. Yann LeCun, Meta's chief AI scientist, argued that open-source foundational models accelerate innovation and advance democratic values by enabling broad research and customized applications. "An open source basic model should be the foundation on which industry can build a vibrant ecosystem," LeCun said.

Other panelists and senators urged…
