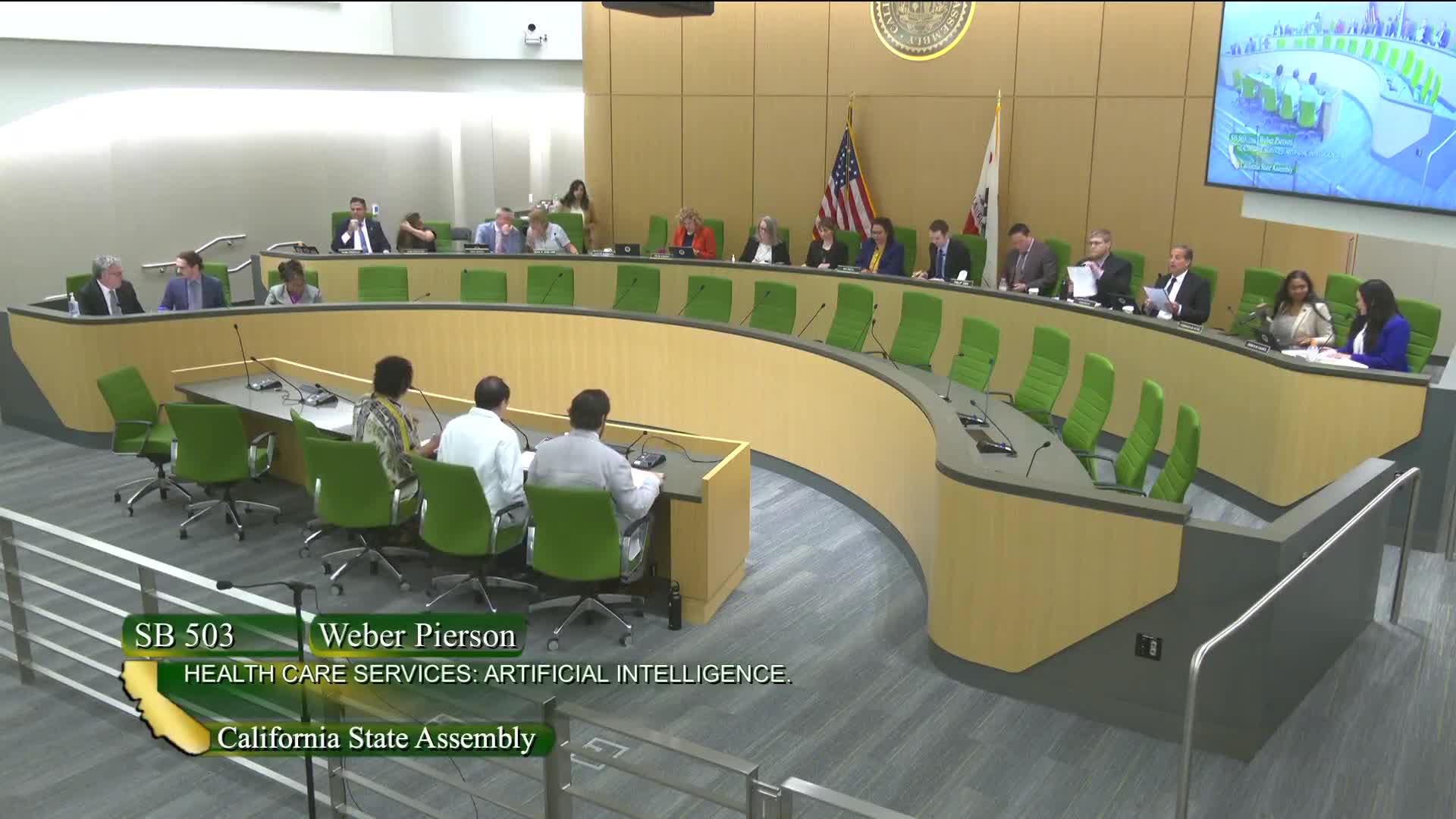

Committee advances SB 503 to require bias identification and monitoring for AI used in clinical decision making

Summary

SB 503 would require developers and deployers of specified AI tools used for clinical decision support or health care resource allocation in health care facilities to identify and mitigate biased impacts and to monitor the tools regularly. The committee advanced the bill, with amendments, to the Privacy and Consumer Protection Committee.

SB 503, authored by Senator Weber Pierson and presented to the Assembly Health Committee on July 8, would require developers and deployers of certain artificial intelligence tools used in clinical decision-making or health care resource allocation to take steps to identify, mitigate and monitor biased impacts.

"AI tools do not operate in a vacuum," Weber Pierson told the committee, adding that bias can be embedded at each step of a tool's development and deployment and that bias in health care algorithms can worsen existing disparities. Physician and organizational…
