Experts at NIST panel urge transparency and caution in using likelihood ratios for forensic testimony

Summary

NIST-affiliated statisticians and an Innocence Project attorney debated whether and how likelihood ratios should be used in court. They stressed model uncertainty and the need for robust data and disclosure, and warned that without transparency, courts may exclude such evidence or give it little weight.

At a forensic-science panel, statisticians affiliated with the National Institute of Standards and Technology and Chris Fabricant of the Innocence Project outlined the technical and legal challenges of using likelihood ratios to express the weight of forensic evidence.

NIST-affiliated presenters argued that likelihood ratios, statistical measures often proposed as a way to express the strength of evidence, are sensitive to model choice and other sources of uncertainty. As one presenter put it, "the degree of variability, or the extent to which different reasonable models give different results, speaks to the reliability or the meaning of any one of those results." They said a single reported number or interval can understate the full uncertainty unless experts explicitly consider a set of reasonable models and quantify the variability across them.
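
For readers unfamiliar with the measure, one common score-based formulation is sketched below. The notation is an assumption for illustration; the presenters did not show these formulas. Here $s$ is an observed comparison score, $f(s \mid H)$ is the probability density of that score under a hypothesis $H$ (same source or different source), and $M_1, \dots, M_K$ is a hypothetical set of reasonable models, each fitted to produce its own densities $f_k$ and hence its own ratio $\mathrm{LR}_k(s)$. The quantity $\Delta(s)$ summarizes how far the models disagree on the log10 scale; $\Delta(s) = 0$ would mean every model gives the same answer.

$$
\mathrm{LR}(s) = \frac{f(s \mid H_{\mathrm{same}})}{f(s \mid H_{\mathrm{diff}})}, \qquad
\mathrm{LR}_k(s) = \frac{f_k(s \mid H_{\mathrm{same}})}{f_k(s \mid H_{\mathrm{diff}})}
$$

$$
\Delta(s) = \max_{k} \log_{10} \mathrm{LR}_k(s) - \min_{k} \log_{10} \mathrm{LR}_k(s), \qquad k = 1, \dots, K
$$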

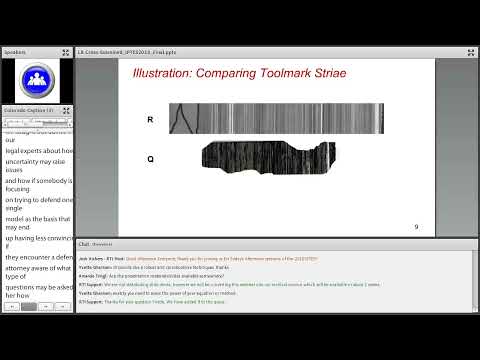

To illustrate model sensitivity, a presenter described a tool-mark example in which a measured score of six produced different likelihood ratios under two fitted models: "if the blue model gives you a likelihood ratio of 100, the red model will give you a likelihood ratio of 75," the presenter said. The statisticians argued that demonstrating narrow variability across reasonable models, and documenting which models were considered and why, is central to establishing that a probabilistic interpretation is "fit for purpose."
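
The arithmetic behind the presenter's example can be made concrete. The short Python sketch below takes the two likelihood ratios quoted for a score of six and reports their range, their ratio, and their spread in log10 units, a scale commonly used when discussing likelihood ratios. Only the values 100 and 75 come from the panel; the function name `lr_spread` is hypothetical.

```python
import math


def lr_spread(lrs):
    """Summarize how much likelihood ratios from different models disagree.

    lrs: mapping of model name -> likelihood ratio computed at the same score.
    Returns (min LR, max LR, max/min ratio, spread in log10 units).
    """
    values = list(lrs.values())
    lo, hi = min(values), max(values)
    return lo, hi, hi / lo, math.log10(hi) - math.log10(lo)


# The two values quoted by the presenter for a tool-mark score of six.
reported = {"blue": 100.0, "red": 75.0}
lo, hi, ratio, log_span = lr_spread(reported)
print(f"LR range {lo:g} to {hi:g}; max/min = {ratio:.3f}; log10 span = {log_span:.3f}")
```

Here the two models differ by a factor of about 1.33, or about 0.125 in log10 units. Both values exceed 1, so both favor the same-source hypothesis; whether a spread of this size counts as "narrow" depends on the intended use, and the sketch does not settle that question.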

Chris Fabricant, an attorney who leads the Innocence Project's strategic litigation department, described how courts and opposing counsel are likely to respond. He said defense lawyers (and prosecutors) will challenge likelihood ratios under Federal Rule of Evidence 702 and related gatekeeping doctrines such as the Daubert standard, focusing on whether the underlying data and model choices are sufficient and transparent. "You can't just offer a likelihood ratio and walk away," Fabricant said, arguing that courts and litigants will demand robust discovery and an "opening of the box" to show the basis of knowledge behind a numeric value.

Fabricant warned that if jurors are likely to misunderstand complex probabilistic testimony, judges may also exclude it under Federal Rule of Evidence 403, which permits exclusion when the probative value of evidence is substantially outweighed by dangers such as unfair prejudice, confusing the issues, or misleading the jury. He urged practitioners to expect cross-examination that presses experts to explain why they selected a particular model, what alternative models produce, and how substrate, data selection, and thresholds influenced the result.

Panelists agreed on practical steps to reduce risk: collect and disclose larger, fit-for-purpose databases; test multiple reasonable models and report the resulting variability; and be transparent about subjective choices embedded in any probabilistic approach. The presenters and Fabricant emphasized that these measures are not a blanket rejection of likelihood ratios but conditions under which such evidence could be more reliable and defensible in court.
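
The second of these steps, testing multiple reasonable models and reporting the resulting variability, can be sketched in Python. This is a minimal illustration under stated assumptions, not the presenters' method: the comparison scores in `SAME_SOURCE` and `DIFF_SOURCE` are invented, and the three candidate model families (normal, lognormal, and a Gaussian kernel density estimate with a fixed bandwidth of 1.0) are arbitrary choices made for the example. Each model is fitted separately to same-source and different-source scores, and the likelihood ratio at a score of six is the ratio of the two fitted densities.

```python
import math
from statistics import NormalDist

# HYPOTHETICAL comparison scores, invented for illustration only (not panel data).
SAME_SOURCE = [5.1, 6.3, 7.0, 7.4, 8.2, 8.8, 9.1, 9.9]
DIFF_SOURCE = [0.8, 1.5, 2.1, 2.6, 3.0, 3.7, 4.4, 5.6]


def normal_model(samples):
    # Fit a normal distribution from the sample mean and standard deviation.
    return NormalDist.from_samples(samples).pdf


def lognormal_model(samples):
    # Fit a normal distribution to the log-scores; divide by x (the Jacobian)
    # so the result is a density on the original score scale.
    dist = NormalDist.from_samples([math.log(x) for x in samples])
    return lambda x: dist.pdf(math.log(x)) / x


def kde_model(samples, bandwidth=1.0):
    # Gaussian kernel density estimate; the bandwidth is an assumed value.
    kernels = [NormalDist(mu, bandwidth) for mu in samples]
    return lambda x: sum(k.pdf(x) for k in kernels) / len(kernels)


# The set of "reasonable models" is an explicit, disclosable input.
CANDIDATE_MODELS = {
    "normal": normal_model,
    "lognormal": lognormal_model,
    "kde(h=1.0)": kde_model,
}


def lr_by_model(score):
    """Return {model name: f_same(score) / f_diff(score)} for each candidate."""
    results = {}
    for name, fit in CANDIDATE_MODELS.items():
        f_same, f_diff = fit(SAME_SOURCE), fit(DIFF_SOURCE)
        results[name] = f_same(score) / f_diff(score)
    return results


if __name__ == "__main__":
    lrs = lr_by_model(6.0)
    for name, lr in lrs.items():
        print(f"{name:>10}: LR = {lr:.2f}")
    print(f"spread: {min(lrs.values()):.2f} to {max(lrs.values()):.2f}")
```

Because the data are made up, the resulting numbers say nothing about real tool-mark evidence and should not be compared with the values quoted at the panel. The design choice worth noting is that the model family is a swappable input, so the list of models considered, and the spread of results across them, can be disclosed alongside any single reported value.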

The panel concluded without any formal action. Presenters recommended further research, improved validation datasets, and clearer guidance for communicating probabilistic evidence to lay fact-finders.