AGO warns AI is making imposter scams and fake charities harder to detect

Summary

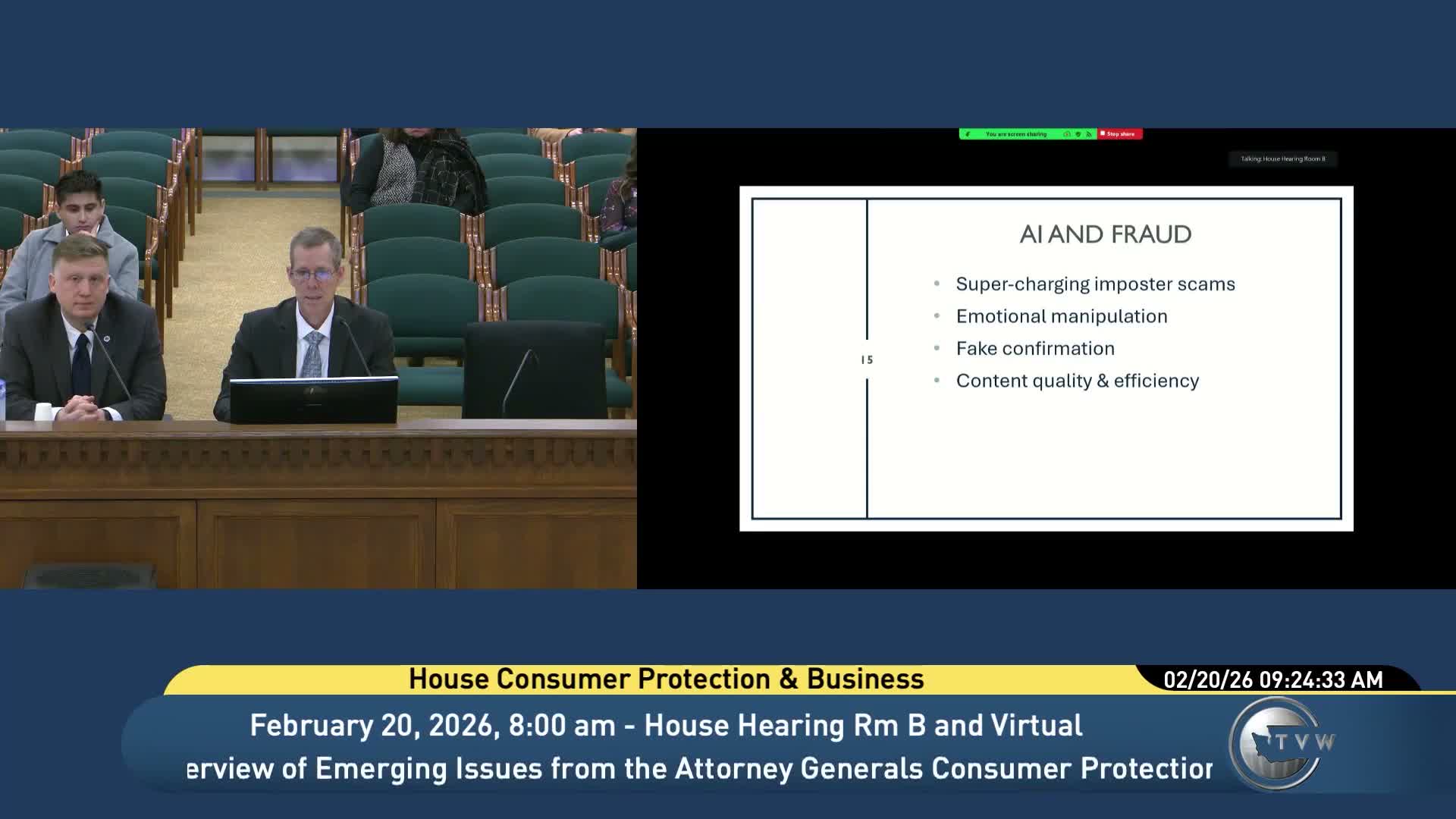

Attorney General consumer protection staff told lawmakers that readily available AI tools — deepfakes, voice cloning and autonomous agents — are making imposter scams more convincing and can be used to create fake organizations, complicating detection and enforcement.

Attorney General staff told a state committee Feb. 20 that artificial intelligence has rapidly changed scammers' capabilities, making imposter scams harder to spot and enabling automated, large‑scale fraud.

"Deep fake videos, voice cloning, and AI agents are already being used to perpetrate imposter scams," Sean Colgan said, describing technology that can produce convincing video and audio and noting tools that can create entire fake online ecosystems, including websites and fake employee profiles.

Colgan and Joshua Steeter told the Consumer Protection and Business Committee that AI can be used to set up fake charities, open bank accounts and even hire lawyers. They described a U.K. example in which an AI‑created entity interacted with regulators without ever meeting a human. Steeter said similar patterns are plausible in Washington because entities can be registered online without human verification.

Why it matters: The presenters said detection becomes harder because past cues — grammar errors, sloppy visuals — are gone. Colgan warned that bots or a hybrid human‑bot approach can generate convincing, error‑free communications at scale and adapt in real time, increasing the speed and effectiveness of scams.

Legal and policy questions: Committee members asked whether current statutes allow the AGO to dissolve nonprofits created by AI and who would be liable (developer, deployer or operator). Steeter said the Nonprofit Corporation Act likely permits dissolution where a nonprofit was created by fraud, but that business corporation law may not. He and Colgan said courts and statutes may need updating and that detection remains a threshold problem.

What’s next: Presenters encouraged policymakers to consider human‑identification checks when registering entities, labeling requirements for AI‑modified media and cross‑agency work with the Secretary of State and federal partners. The AGO said it will follow up with the committee on the AG task force on AI and coordinate with regulatory partners.